There’s a problem brewing in the workplace – employees want to bring to work aspects of technology that they use in their personal life, be it their mobile phones, laptops or even just specific applications.

If businesses haven’t come up against this consumerisation already, the chances are that they will, sooner rather than later – and that in all probability it is happening already behind their backs.

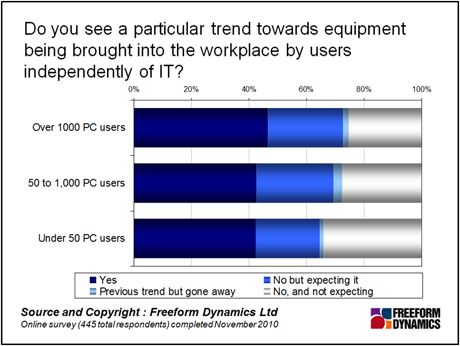

Recent research around desktop equipment shows just how this is starting to pan out. While there is nothing that IT would prefer more than a locked-down world that is easier to manage, personally owned technology is either being brought into the business by employees already, or there is an expectation that it will be in the future, for large and small companies alike (see chart).

To be fair, the consumerisation of IT is a problem that has been around for a while. But every time something newer and shinier comes along – the iPad or the Galaxy Tab, for example – the debate is resurrected yet again, and usually more vigorously than the last time. So how should businesses approach this thorny area?

From a user’s perspective, making use of advanced technology in the form of smartphones, PCs, slate devices, and so on, is an integral part of everyday life. More importantly, the relationship between users and their devices and services can be incredibly close. It is perhaps unsurprising that they want to use this kit in the workplace: it is often better regarded for design and performance than standard office-issue equipment, users are familiar with it, and arguably, because of this, it allows them to be much more efficient. And if they are willing to spend their own money in the process, the capex budget might be cut some slack, provided any company kit already purchased for them is properly redeployed in the business.

But that’s only one side of the story. From a business perspective, allowing carte blanche on what equipment is brought into the business is a bit like leaving the front door to the office wide open, and not even bothering with the burglar alarm when no-one is there. Without adequate preparation and precautions being put in place, it just isn’t a very clever thing to do, and for a number of very good reasons.

Support and repair of such devices can become a major area of concern – in particular defining what can and cannot be supported, and where the boundaries of responsibility lie when things go wrong. Liability is another thread – who is liable when a corporate application causes problems with the user’s own software, or more worryingly, when user-acquired software is used illegally in a work situation?

Then there is the issue of security, with users connecting into company resources with who-knows-what security in place. The likelihood of malware getting in rises considerably when inadequately protected systems are employed. Giving users free rein implies that they are all sufficiently competent to manage IT risks and security. However, our research shows this is far from the case.

Attempting to stop the influx of any devices and access to ‘community’ applications will, in all probability, fail miserably. So, like it or not, compromise is needed. But how should businesses go about deciding what’s in and what’s out?

The list of equipment, applications and services will depend on the needs of the business, but also has to take into account what makes users tick from a technology standpoint. What this boils down to is understanding, rather than assuming, what users need and want, and looking at whether and how these needs and wants should be incorporated into the business.

So, if a handful of users want to use an iPhone for work purposes, what are the risks, benefits, cost of support and so on? If the argument doesn’t stack up in favour, are there close alternatives that might be offered? Or if there are more than a few users in the iPhone camp, does it make sense to add it to the company list and support it accordingly? Similarly, with social media and collaborative applications such as Facebook – what is the relative importance to the company, and what business-focussed alternatives can be offered?

This is a move away from how things have been done traditionally, but it isn’t about giving users the freedom to dictate what IT should be in place. Rather, it is about making sure that they aren’t ‘putting their own IT in place’ without company sanction.

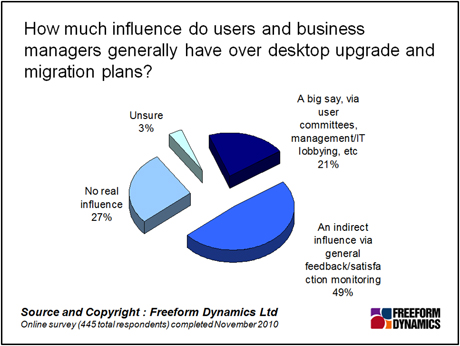

Many businesses are already being more proactive in their acknowledgement of users’ needs and wants, either through routes such as user committees and management/IT lobbying, or more indirectly, through general feedback and satisfaction monitoring, as our recent research into desktop computing, mentioned earlier, shows (see chart).

Elements of this will probably be a pretty big irritant to IT, particularly to those who believe that if you let users have control over things they will break them – always have and always will. Possibly, but is that so different from what happens now? And if it is their own ‘thing’ then maybe they will be a bit more careful.
