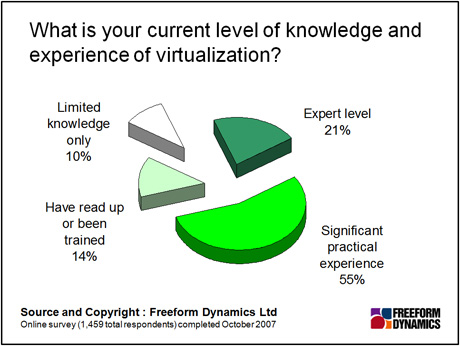

Indisputably, server virtualization has a lot going for it. The challenge does not lie in its faults, given that no technology is perfect. Rather, when we researched this topic we found that the level of competence and/or experience around virtualization is not particularly high.

So what could go wrong? Top of the list – given the gung-ho way that some organisations are rolling out server virtualization – is the potential for proliferation of virtual machines, and subsequent virtual server sprawl. As one Reg reader has pointed out: “Virtualization does save a kicking from the Finance Director.” But we have also been told how the cost savings may well be storing up manageability issues down the line.

At the moment we are probably still in more of a consolidation phase than a proliferation phase (let us know if you disagree) – but the danger with VMs is they can be just too easy to create. There can be such a thing as too much resource, or indeed too much flexibility, in how that resource is allocated.

Sprawl does not have to be an issue in its own right, unless one counts the potential for running non-essential workloads, “just because we can”. (Answer: switch them off.) The real challenge comes when we start to think about how to maintain all the software assets in that highly dynamic environment we know as the data centre. Saying ‘yes’ to each user demand may grate when one thinks about how exactly all those new machines are to be operated.

Patch work

For a start, we have to think about patch management. All operating systems require updates for reasons of security, bug fixes, new features and the like, and it’s never quite as simple as just applying a patch and seeing what happens (things tend to stop working that way). From a patching perspective, the simpler (i.e. the fewer machines) the better – less to test, less to go wrong, and indeed fewer dependencies to manage.

Even if things stay relatively static, server proliferation can cause problems of software asset management and licensing. Knowing exactly what is running where is a challenge for all but the smallest, most efficiently managed data centres – and indeed, the chances are pretty good that one or two unlicensed copies of a given package will be running somewhere on the network through nobody’s fault.

It doesn’t take much of a leap to imagine what happens when virtualization comes into play. If a package is licensed on a VM that is sitting idle on a disk somewhere, does it count? Or indeed, if two VMs created to test different configurations of the same software package end up running on different physical servers for a couple of weeks until someone realises, should we be hurling the CIO into jail? The loopholes and complexities are legion, and many software vendors remain behind the curve when it comes to dealing with them.
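To make the “does it count?” question concrete, here is a minimal sketch of a licence check over a VM inventory. Everything in it is hypothetical and for illustration only: the `VM` record, the `licence_shortfall` function, the package names and the entitlement figures are assumptions, not any vendor’s tool or licensing terms. It simply shows how the same estate can look compliant or over-deployed depending on whether idle VMs are counted.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class VM:
    """Hypothetical inventory record for one virtual machine."""
    name: str
    host: str             # physical server the VM lives on
    running: bool         # False = image sitting idle on disk
    packages: tuple = ()  # licensed packages installed in the VM


def licence_shortfall(vms, entitlements, count_idle):
    """Return {package: copies beyond entitlement} for over-deployed packages.

    count_idle decides whether powered-off VMs count as installed copies;
    which answer is right depends on the vendor's terms (an assumption here).
    """
    installed = Counter(
        pkg
        for vm in vms
        if vm.running or count_idle
        for pkg in vm.packages
    )
    return {
        pkg: copies - entitlements.get(pkg, 0)
        for pkg, copies in installed.items()
        if copies > entitlements.get(pkg, 0)
    }


# Made-up example: two test VMs on different hosts plus one idle image.
inventory = [
    VM("test-a", "host1", True, ("dbserver",)),
    VM("test-b", "host2", True, ("dbserver",)),
    VM("archive", "host1", False, ("dbserver", "reporting")),
]
entitlements = {"dbserver": 2, "reporting": 1}

strict = licence_shortfall(inventory, entitlements, count_idle=True)
lenient = licence_shortfall(inventory, entitlements, count_idle=False)
print("counting idle VMs:", strict)
print("running VMs only:", lenient)
```

Under the strict reading the idle image tips the made-up `dbserver` package one copy over its entitlement; under the lenient reading nothing is flagged. The inventory is identical in both cases, which is exactly the ambiguity at issue.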

Dream within a dream

Finally (for this article anyway) we have the “dream within a dream” effect of virtualization. While some vendors might maintain that the virtual environment exists in its own right, a management bubble that can be dealt with independently of everything else, few organisations will have the luxury of having a separate team whose role, remuneration and training are oriented solely around what’s happening in the virtual world – if indeed that’s what is wanted.

More likely is that the two worlds must be managed alongside each other as a hybrid environment: physical and virtual rubbing along as best they can. If this is the case, better to integrate management with what is already there – rather than having to learn another set of independent tools. We may be a long way from the ‘single pane of glass’ aspiration, but yet-another-management-framework is likely to be the last thing anybody wants.

Bringing things full circle, the biggest danger at the moment is not so much whether things can go wrong or become more complex – such is IT. Rather, given that skills and experience around virtualization remain low, the danger is that we create costly problems for coming years, in the race to save money this quarter.
